Copyright © 2025 Yee Shian Lee. All Rights Reserved. No part of this publication may be reproduced without prior written permission except for brief quotations in reviews or scholarly work.

The UX Shift

Many of the organizations I have had the privilege to work with are keen to innovate with AI Agents and to build software solutions in which multiple agents interact to deliver the best outcomes for their customers. Yet one thing holds them back: they are still building the software experiences of the past. If you are vibe coding, GenAI will likely reproduce those same patterns, because the data and user interfaces it was trained on were built for non-agentic experiences.

When multi-touch interaction arrived with the smartphone, many teams still designed software for single touches and taps, much as we use a mouse with single clicks and double-clicks. “Angry Birds” was one application that broke through those limits: the game was designed around multi-touch capabilities, such as swiping a finger to manipulate the game characters.

We are now at the same stage with Agentic Experiences. Designing solutions for the Agentic Era requires understanding the technical possibilities and limitations while breaking through the norms of software interaction. Let’s start by examining the ROI of redesigning user experiences for Agentic solutions.

ROI for Agentic Experiences

In the past, ROI for user experience was typically measured by user satisfaction scores, efficiency gains, and engagement metrics like page views or time on site. These metrics focused on how users interacted with screens and navigated workflows.

Having worked in the consumer market for two decades, I have seen that user experience defines the success of the product, and thus the success of the business. User experience shapes how technology works for people, and the one or two winners in each market had the most innovative user experiences and a value proposition that resonated with consumers, built on ground-breaking technology platforms.

In the agentic era, where AI agents orchestrate and plan, the focus shifts from screen activity to real business results. ROI is measured by how effectively agents complete tasks, resolve issues, and deliver results that matter, such as faster time-to-resolution, higher accuracy, policy compliance, and cost efficiency. Leaders track not just how users feel, but how reliably and safely agents deliver business value.

This evolution means organizations can prove the value of Agentic Solutions with evidence: completed workflows, reduced manual effort, improved compliance, and transparent operations. ROI is grounded in success metrics you can review.

Designing AI Agents to Achieve User Goals

A foundational principle in user experience is that systems should be designed around real user goals and measured by how efficiently those goals are achieved. In the context of Agentic UX, this means moving beyond feature lists and focusing on what users want to accomplish. Cataloging user goals and measuring the time it takes to complete tasks are critical practices for ensuring that AI agents deliver tangible, measurable impact. By grounding agent design in these usability principles, teams can create experiences that are not only intelligent but also purposeful and efficient.

- Catalog User Goals. The first step is to systematically catalog user goals. This involves engaging with users to understand their true objectives: what they are trying to achieve, the pain points they encounter, and what matters most. Users need to look beyond their day-to-day work and individual job scopes to understand the business process flows where Agentic AI can be applied. For example, ask the 5 Whys to uncover the true objectives before building more dashboards and reports; these visualizations are important and inform us, but we need to understand the broader workflow to enable agents to act on our behalf. These user goals should be documented and prioritized based on frequency, business value, and user impact. For AI agents, each goal should be translated into a set of clear, actionable tasks that multiple agents can perform autonomously or in collaboration with the user. Maintaining a living catalog of these goals ensures that agent development remains aligned with user needs and organizational priorities.

- Measure and Evaluate AI Agents. Once user goals are defined, it is essential to measure how effectively the agent helps users achieve them. Instrumenting agents to log key usability metrics, such as time to complete each task, success rates, and points of friction, provides actionable data for continuous improvement. Evaluations such as task adherence and error rate play a crucial role here, enabling teams to systematically assess agent performance against these metrics. For example, tracking the average time required to submit an expense report or resolve a support ticket using Microsoft Foundry’s evaluation tools can reveal bottlenecks and opportunities for streamlining agent workflows. Monitoring where users abandon tasks or encounter errors helps teams identify and address usability gaps. These insights inform design patterns that optimize agent flows for both novice and expert users. For new users, agents can provide step-by-step guidance and contextual help, while experienced users benefit from shortcuts and natural language commands that accelerate task completion. By designing for flexibility and efficiency, agents can adapt to varying user skill levels and preferences, ensuring that everyone can achieve their goals with minimal friction.

- Transparency and feedback are equally important. Users should always know where they are in a process, what the agent is doing on their behalf, and what steps remain. Progress indicators, clear explanations of agent actions, and timely feedback empower users to steer or intervene as needed. Making agent operations visible not only enhances user confidence but also supports compliance in enterprise settings.

- Continuous improvement is driven by systematically tracking usability metrics, such as success rates, time on task, error frequency, and user satisfaction, using evaluation dashboards and reporting tools. Regularly reviewing these metrics enables teams to iterate on agent design, prioritize enhancements, and demonstrate measurable impact to stakeholders. This evidence-based approach ensures that Agentic UX evolves in response to real-world usage and delivers sustained value over time.
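To make the goal catalog more concrete, here is a minimal Python sketch of prioritizing goals by frequency, business value, and user impact, with each goal broken down into actionable agent tasks. The `UserGoal` schema, the 1-5 scales, and the default weights are hypothetical illustrations rather than a prescribed scoring model.

```python
from dataclasses import dataclass, field


@dataclass
class UserGoal:
    """One entry in a living catalog of user goals (hypothetical schema)."""
    name: str
    frequency: int       # assumed 1-5 scale: how often users pursue this goal
    business_value: int  # assumed 1-5 scale
    user_impact: int     # assumed 1-5 scale
    tasks: list[str] = field(default_factory=list)  # actionable tasks agents can perform

    def priority(self, weights: tuple[float, float, float] = (0.3, 0.4, 0.3)) -> float:
        """Weighted priority score; the default weights are illustrative only."""
        wf, wb, wu = weights
        return wf * self.frequency + wb * self.business_value + wu * self.user_impact


def prioritize(catalog: list[UserGoal]) -> list[UserGoal]:
    """Return goals ordered from highest to lowest priority score."""
    return sorted(catalog, key=lambda g: g.priority(), reverse=True)


# Illustrative catalog entries; the scores are made up for the example.
catalog = [
    UserGoal("submit an expense", frequency=5, business_value=3, user_impact=4,
             tasks=["capture receipt", "extract amount", "check policy", "route for approval"]),
    UserGoal("check status", frequency=4, business_value=2, user_impact=3,
             tasks=["look up report", "summarize approval state"]),
    UserGoal("correct a mistake", frequency=2, business_value=3, user_impact=5,
             tasks=["locate submission", "apply correction", "resubmit"]),
]

for goal in prioritize(catalog):
    print(f"{goal.priority():.1f}  {goal.name}: {', '.join(goal.tasks)}")
```

Keeping the weights explicit makes them easy to revisit in stakeholder reviews as the catalog evolves.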

By embedding these usability practices and evaluations into Agentic UX, organizations can ensure that their AI agents are not only innovative but also truly impactful, delivering outcomes that matter, efficiently and transparently.

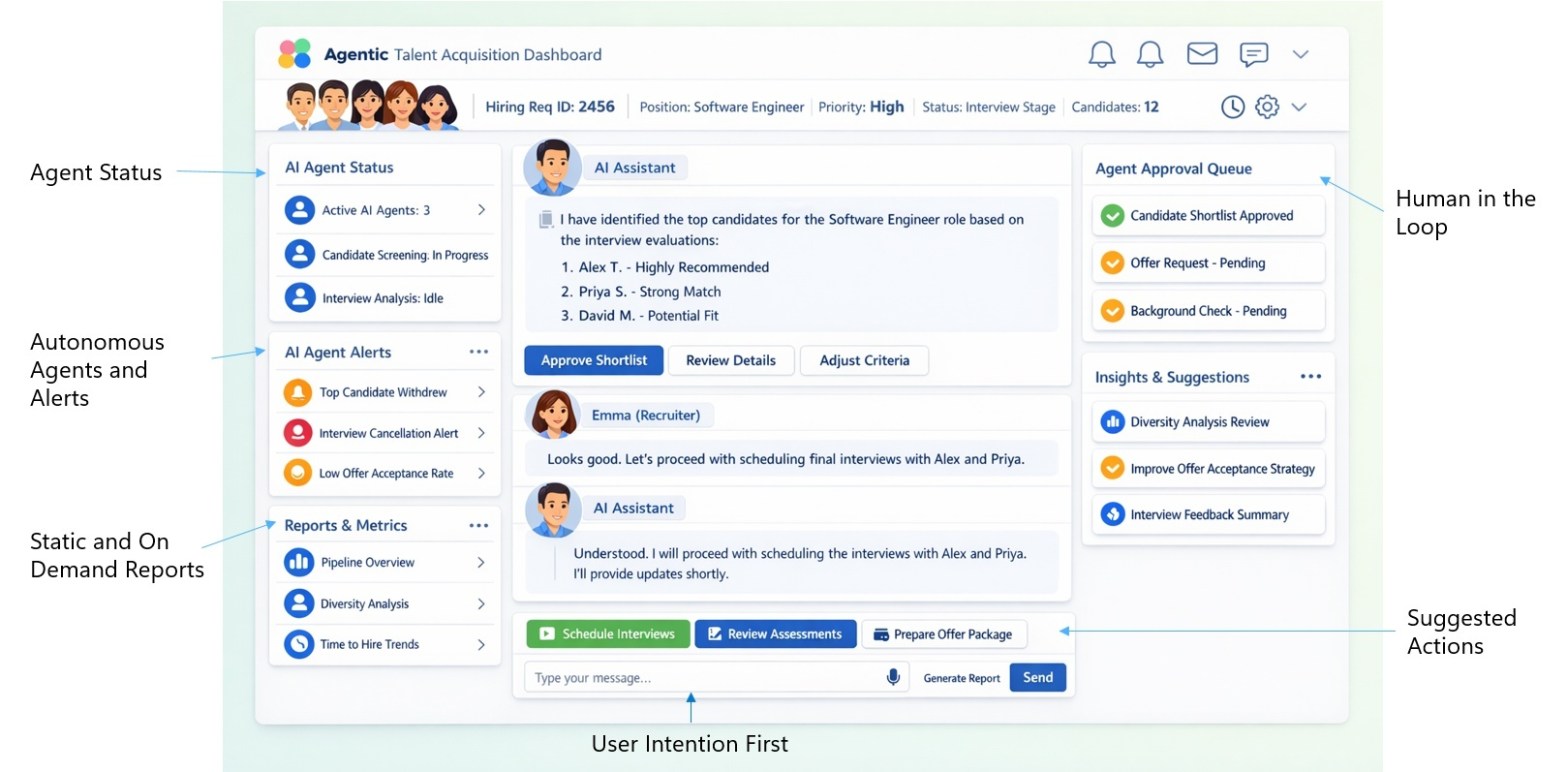

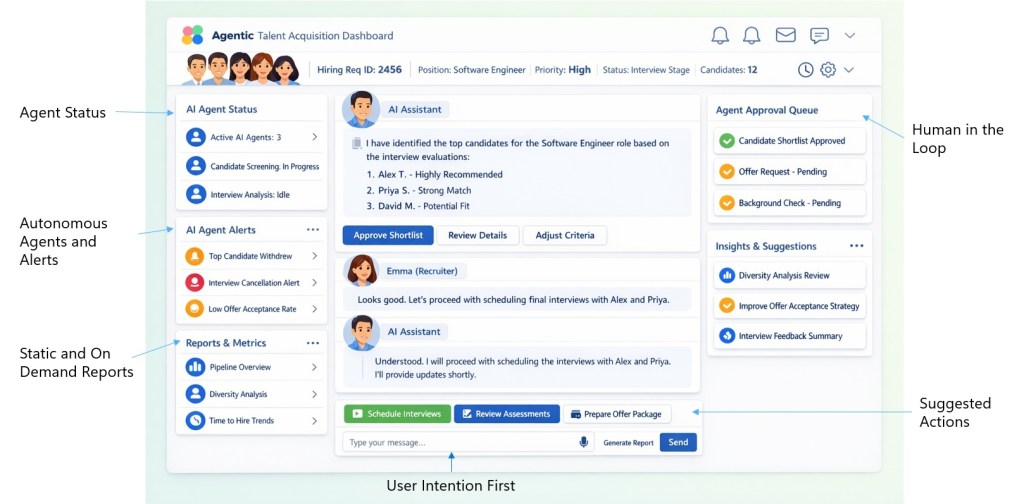

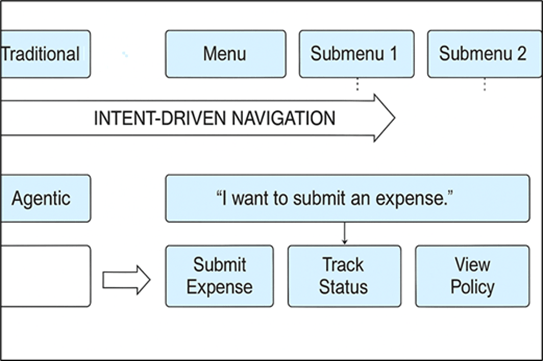

Evolving the Information Architecture with Intent-Driven Navigation

Traditional navigation relies on fixed, hierarchical menus that force users to learn where things are. In an agentic experience, this changes dramatically. While menus and static screens will still have their place for frequently used tasks, users can now simply express their intent, such as “I want to submit an expense” or “Show me last month’s reports,” and the agent dynamically surfaces the most relevant actions and next steps. Behind the scenes, this is powered by a catalog that maps user intents to available actions. Rather than overwhelming users with choices, the agent presents contextual prompts like, “Would you like to attach a receipt or view policy?” This approach eliminates friction and makes the experience feel natural and adaptive.
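The intent catalog described above can be sketched as a small lookup structure. This Python sketch assumes a hypothetical `IntentEntry` schema and uses naive keyword overlap to resolve an utterance; a production agent would more likely rely on an LLM or a trained intent classifier, but the catalog shape (intent, surfaced actions, follow-up prompt) would stay much the same.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class IntentEntry:
    """Hypothetical catalog entry mapping one user intent to surfaced actions."""
    intent: str
    keywords: frozenset[str]  # naive matching cues, standing in for an LLM/classifier
    actions: tuple[str, ...]  # actions the agent can surface for this intent
    follow_up: str            # contextual prompt offered once the intent is recognized


INTENT_CATALOG = [
    IntentEntry(
        intent="submit_expense",
        keywords=frozenset({"submit", "expense", "receipt"}),
        actions=("Submit Expense", "Attach Receipt", "View Policy"),
        follow_up="Would you like to attach a receipt or view policy?",
    ),
    IntentEntry(
        intent="view_reports",
        keywords=frozenset({"show", "report", "reports", "last", "month"}),
        actions=("Open Report", "Export", "Compare Periods"),
        follow_up="Which report would you like to open?",
    ),
]


def resolve_intent(utterance: str) -> Optional[IntentEntry]:
    """Return the catalog entry sharing the most keywords with the utterance,
    or None when nothing matches."""
    words = {w.strip(".,!?\"'’").lower() for w in utterance.split()}
    best, best_overlap = None, 0
    for entry in INTENT_CATALOG:
        overlap = len(words & entry.keywords)
        if overlap > best_overlap:
            best, best_overlap = entry, overlap
    return best


match = resolve_intent("I want to submit an expense")
if match is not None:
    print(match.actions)    # the suggested actions to surface
    print(match.follow_up)  # the contextual prompt
```

Returning `None` when nothing matches leaves room for the agent to ask a clarifying question instead of guessing.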

Conversational Menus and Progressive Disclosure

Menus are no longer static lists; they become conversational and adaptive. Agents can offer quick actions, sometimes called “suggested actions”, within chat, voice, or UI cards. For example, after a user asks about expenses, the agent might display options like [Submit Expense] [View Policy] [Track Status].

Progressive disclosure must be intentionally designed into agentic systems, just as thoughtfully as information architecture. It ensures simplicity: advanced options only appear when relevant, keeping the experience streamlined for most users while still offering depth for experts. This design principle balances clarity with power, making complex workflows approachable.
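Progressive disclosure can be expressed as a filter over suggested actions. In this minimal Python sketch, which assumes a hypothetical `SuggestedAction` type and two invented advanced options, basic actions such as [Submit Expense] [View Policy] [Track Status] are always shown, while advanced ones appear only for expert users or when a context signal makes them relevant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuggestedAction:
    label: str
    advanced: bool = False                        # advanced options stay hidden by default
    relevant_when: frozenset[str] = frozenset()   # context signals that reveal them


EXPENSE_ACTIONS = [
    SuggestedAction("Submit Expense"),
    SuggestedAction("View Policy"),
    SuggestedAction("Track Status"),
    # Hypothetical advanced options, shown only when context calls for them.
    SuggestedAction("Split Across Cost Centers", advanced=True,
                    relevant_when=frozenset({"multi_project"})),
    SuggestedAction("Currency Override", advanced=True,
                    relevant_when=frozenset({"foreign_receipt"})),
]


def disclose(actions, context: set[str], expert: bool = False, limit: int = 4) -> list[str]:
    """Return the action labels to show for the current step."""
    visible = [
        a for a in actions
        if not a.advanced or expert or (a.relevant_when & context)
    ]
    # Basic actions first; sort is stable, so catalog order is kept within each group.
    visible.sort(key=lambda a: a.advanced)
    return [a.label for a in visible[:limit]]
```

For instance, `disclose(EXPENSE_ACTIONS, {"foreign_receipt"})` adds “Currency Override” after the three basic actions, while an empty context shows only the basics. The `limit` parameter caps how many options appear at once, keeping the menu streamlined.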

This progression is not static; it should be captured from the end user’s perspective, encompassing the entire user journey, underlying workflows, company policies, and even nuanced business logic. All these elements should be systematically added to the knowledge bases that AI agents rely on. In practice, this step is often overlooked by both designers and engineers, as they may assume that large language models can infer or generate the right answers without explicit, proprietary information. However, advancing the capabilities of AI agents depends on providing them with the right context and domain-specific knowledge up front. Without this foundation, even the most sophisticated models will struggle to complete the tasks users have in mind, leading to gaps in experience and missed business outcomes. Thoughtful progressive disclosure, grounded in real organizational knowledge, is essential for building agentic systems that are both effective and trustworthy.

Dissolving Information Architecture into Agentic Workflows

Rather than treating information architecture as a static classification of information and capabilities, the agentic era dissolves it into a living workflow knowledge base that is deeply embedded within the agent’s operational context. Here, knowledge is woven directly into user conversations, surfaced through suggested actions, and revealed progressively as users advance through their tasks. This dynamic approach supports discovery and effectiveness by ensuring that relevant information, policies, and business logic are contextually available at each step. As users interact, the agent draws from its workflow knowledge base to guide, clarify, and adapt, making every suggestion, prompt, or disclosure purposeful and timely. By embedding information architecture into the flow of work and conversation, organizations empower agents to deliver more intuitive, discoverable, and outcome-driven experiences.

For example, consider an AI-powered expense submission agent. The team catalogs user goals such as “submit an expense,” “check status,” and “correct a mistake.” The agent is instrumented to log the time taken for each submission, track completion rates, and capture points where users need assistance. Design patterns include both a guided, step-by-step flow for first-time users and a quick-submit option for repeat users. Progress is clearly displayed (“Step 2 of 3: Upload receipts”), and the agent explains each action (“I’m routing your report for approval now”). Metrics are reviewed regularly to identify areas for improvement and to ensure that the agent continues to meet user needs efficiently.
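The instrumentation in this example could be summarized per goal roughly as follows. This Python sketch assumes a hypothetical `Session` log record and made-up sample timings; in practice these events would come from your telemetry or evaluation tooling rather than an in-memory list.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One logged attempt at a user goal (hypothetical event schema)."""
    goal: str
    duration_s: float
    completed: bool
    help_steps: tuple[str, ...] = ()  # steps where the user asked for assistance


def summarize(sessions: list[Session]) -> dict[str, dict]:
    """Per goal: completion rate, average time of completed runs, top friction step."""
    by_goal = defaultdict(list)
    for s in sessions:
        by_goal[s.goal].append(s)

    report = {}
    for goal, runs in by_goal.items():
        done = [r for r in runs if r.completed]
        friction = Counter(step for r in runs for step in r.help_steps)
        report[goal] = {
            "completion_rate": len(done) / len(runs),
            # Average only completed runs, so abandoned sessions don't skew timing.
            "avg_time_s": mean(r.duration_s for r in done) if done else None,
            "top_friction": friction.most_common(1)[0][0] if friction else None,
        }
    return report


# Invented sample sessions, for illustration only.
sessions = [
    Session("submit an expense", 95.0, True),
    Session("submit an expense", 140.0, True, ("upload_receipts",)),
    Session("submit an expense", 60.0, False, ("upload_receipts",)),
    Session("check status", 12.0, True),
]
report = summarize(sessions)
```

With these invented sessions, “submit an expense” shows a completion rate of 2/3, an average completed time of 117.5 seconds, and `upload_receipts` as the most common help step, which would point the team at “Step 2 of 3: Upload receipts”.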

Contextual Help and Explainability

Help is no longer buried in documentation; it appears exactly when users need it. Agents surface contextual guidance like, “You can ask me to submit expenses, track status, or view policy. Here’s what each option does…” Explainability is built in: every recommendation or action can be questioned with a simple “Why?” dialog, revealing the reasoning and linking to relevant policies or documentation.
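One way to support a “Why?” dialog is to store each agent action together with the reasons and policy references behind it, so an explanation can be rendered on demand. This Python sketch assumes a hypothetical `AgentAction` record; the reasons and the policy reference string are invented placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class AgentAction:
    """An action the agent took or recommends, stored with its justification."""
    description: str
    reasons: list[str]
    policy_refs: list[str] = field(default_factory=list)  # hypothetical doc IDs or links


def explain(action: AgentAction) -> str:
    """Answer a user's "Why?" with the recorded reasoning and policy links."""
    lines = [f"I {action.description} because:"]
    lines += [f"  - {reason}" for reason in action.reasons]
    if action.policy_refs:
        lines.append("Relevant policy: " + ", ".join(action.policy_refs))
    return "\n".join(lines)


routing = AgentAction(
    description="routed your report to your manager for approval",
    reasons=[
        "the total exceeds the auto-approval limit",
        "the receipt date falls within the current reporting period",
    ],
    policy_refs=["expense-policy#approval-thresholds"],
)
print(explain(routing))
```

Capturing the reasons when the action is taken, rather than reconstructing them afterward, makes it more likely that the explanation reflects what the agent actually relied on.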

Adaptive Menus Across Modalities

Menus can adapt to the channel and modality. In chat, users see quick-reply buttons or suggested intents. In voice, the agent offers spoken prompts like, “You can say ‘submit expense’ or ‘track status.’” In graphical interfaces, dynamically generated cards or panels update based on context. This flexibility keeps the experience consistent across channels.
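Adapting menus across modalities can be sketched as rendering one channel-agnostic list of actions differently for each channel. The payload shapes below (`quick_reply`, `card`) are hypothetical and do not follow any particular chat or card framework's schema.

```python
from typing import Any


def render(actions: list[str], channel: str) -> Any:
    """Render one set of suggested actions for a given channel."""
    if channel == "chat":
        # Quick-reply buttons (hypothetical payload shape).
        return [{"type": "quick_reply", "title": a} for a in actions]
    if channel == "voice":
        # Spoken prompt listing the phrases the user can say.
        phrases = [f"'{a.lower()}'" for a in actions]
        if not phrases:
            return "How can I help?"
        if len(phrases) == 1:
            return f"You can say {phrases[0]}."
        return f"You can say {', '.join(phrases[:-1])} or {phrases[-1]}."
    if channel == "gui":
        # A dynamically generated card (hypothetical payload shape).
        return {"type": "card", "buttons": list(actions)}
    raise ValueError(f"unsupported channel: {channel}")
```

For example, `render(["Submit Expense", "Track Status"], "voice")` returns `"You can say 'submit expense' or 'track status'."`. Keeping the action list separate from its presentation means filtering and ordering can be done once, before any channel-specific rendering.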

Governance, Safety, and Personalization

Every option presented by the agent respects governance and safety rules. Menus are filtered by user role, permissions, and policy, ensuring compliance and reducing risk. For example, expense approval options should only surface in the UI for roles with approval permission. Personalization adds another layer: the agent remembers frequent actions and surfaces them first, “Welcome back! Ready to submit another expense?” This combination of safety and personalization makes the experience both secure and delightful.
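The order of operations matters here: filter for governance first, then personalize. This Python sketch assumes a hypothetical role-to-permission map and invented permission names such as `expense.approve`; in a real system, usage counts would come from the agent's memory of past actions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MenuOption:
    label: str
    required_permission: Optional[str] = None  # None means open to every role


# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "employee": {"expense.submit", "expense.view"},
    "manager": {"expense.submit", "expense.view", "expense.approve"},
}

OPTIONS = [
    MenuOption("Submit Expense", "expense.submit"),
    MenuOption("Track Status", "expense.view"),
    MenuOption("View Policy"),
    MenuOption("Approve Expenses", "expense.approve"),
]


def build_menu(role: str, usage: Counter) -> list[str]:
    """Filter by permission first (governance), then order by past use (personalization)."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    permitted = [
        o for o in OPTIONS
        if o.required_permission is None or o.required_permission in allowed
    ]
    # Most-used first; sorted() is stable, so ties keep their catalog order.
    return [o.label for o in sorted(permitted, key=lambda o: -usage[o.label])]
```

Because filtering happens before ranking, usage history can reorder permitted options but can never re-surface one the role is not allowed to see. For example, `build_menu("employee", Counter())` omits “Approve Expenses” regardless of how usage is recorded.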

Example: Agentic Menu Flow

User: “I need help with expenses.”

Agent: “I can help you with these options: [Submit Expense] [Track Status] [View Policy]. Which would you like to do?”

User: “Submit expense.”

Agent: “Great! Please upload your receipt or enter the amount. Need help? [View Policy]”

Evolving Design Thinking for Agentic Workflows

During a Design Thinking session, facilitators help teams systematically explore and identify AI use cases and agentic workflows within existing user journeys. Key inputs to gather during these sessions include the user’s view of AI-enabled workflows, automation tasks, AI roles and responsibilities, and human review and approval steps. This approach ensures that Agentic Automation is not random but rooted in real user needs and goals. The process supports rapid prototyping, prioritization, and alignment with human-centered design criteria such as viability and feasibility, improving the success rate of Agentic AI projects and making every agentic capability impactful.

Next Steps

We are in a paradigm shift where technology and AI capabilities are changing rapidly. Full agentic systems require consideration of many different aspects. I have written an end-to-end guide to architecting Agentic Experiences; you can learn more practical concepts and solution ideas from my book, Architecting Impact: Agentic UX and the Future of Cloud Design, available on Amazon.com.

What are your thoughts on this topic?